ISSN: 2292-8588

Volume 40, No. 2, 2025

Navigating Online Learning and Artificial Intelligence: Identifying and Managing Assessment Risks in Private Higher Education in South Africa


https://doi.org/10.55667/10.55667/ijede.2025.v40.i2.1385

Abstract: This paper investigates assessment integrity risks in online distance learning programmes at a private higher education institution in South Africa and the strategies used to manage them. Guided by an interpretivist lens, the research applies the Committee of Sponsoring Organisations of the Treadway Commission (COSO) Enterprise Risk Management (ERM) framework and sociotechnical systems theory to explore how technological, behavioural, and institutional factors intersect to influence academic integrity. Data from staff questionnaires reveal three interrelated dimensions of risk: emergent (artificial intelligence-driven misconduct and integrity threats), behavioural (unethical and dishonest practices), and structural (technological barriers and infrastructural limitations). Synthesised through the Emergent, Behavioural, and Structural risks addressed through Tools, Practices, and Training (EBS-TPT) model, the research highlights the importance of integrating digital tools and ethical training within proactive, design-oriented assessment strategies. Rather than relying solely on detection mechanisms, institutions should foster artificial intelligence (AI) literacy, ethical awareness, and authentic assessment design to sustain credibility in digital learning environments. The paper contributes a conceptual framework and practical insights for higher education institutions seeking to balance technological innovation with academic integrity in the evolving AI era.

Keywords: online distance learning, academic integrity, assessment dishonesty, risk management protocols, private higher education.

This work is licensed under a Creative Commons Attribution 3.0 Unported License.


Research shows that the pandemic-driven shift to online business operations has heightened exposure to cyber-risks such as data breaches, impersonation, and fraud (Dolgui & Ivanov, 2020; Habib & Hamadneh, 2021). In higher education, institutions that already offered or hurriedly moved academic programmes online have reported spikes in assessment misconduct and other unethical behaviour, creating serious compliance and reputational vulnerabilities (Bayram & Tikman, 2022; Khalil & Er, 2023; Clarke et al., 2023). The simultaneous explosion of easily accessible artificial intelligence (AI) tools compounds these worries: lecturers and administrators now face more sophisticated e-cheating and struggle to craft assessments that credibly demonstrate learning in online settings (Verhoef & Coetser, 2021). As a result, doubts about the authenticity of student work have intensified across the sector, especially in private higher education institutions, which are often criticised for prioritising financial sustainability over academic integrity (Stander & Herman, 2017). This has major implications for the academic integrity and sanctity of qualifications offered by private higher education institutions in a technologically driven world.

The unplanned transition towards online remote (distance) learning has presented new challenges for academic integrity in higher education generally (Gamage et al., 2020; Verhoef & Coetser, 2021). In developing countries, too, the accelerated transition to online learning has introduced numerous challenges in safeguarding academic integrity (Mutongoza & Olawale, 2022). According to Holden et al. (2021), the management of learning activities in the online environment, designed to widen access to education, may have inadvertently created opportunities for academic misconduct. Plagiarism and other academically dishonest practices have become more frequent and perhaps more difficult to address (Mutongoza & Olawale, 2022).

Today, new technologies and systems designed to increase student engagement, collaboration, and seamless access to information may have created new avenues for assessment malpractices or academic dishonesty within higher education institutions (Mutongoza & Olawale, 2022). The proliferation of user-friendly AI tools, especially large language models such as OpenAI’s ChatGPT, which can generate complete responses for students, illustrates this trend (Cotton et al., 2024). Although these tools can broaden access to information and facilitate learning engagements, they also raise pressing concerns about academic honesty and ethics (Khalil & Er, 2023; Bin-Nashwan et al., 2023). These tools have the potential to enable and conceal dishonest or unethical academic practices, given that it is difficult to differentiate between human- and machine-generated writing (Cotton et al., 2024; Khalil & Er, 2023). Similarly, research by Artyukhova et al. (2024) reveals that, while AI tools can enhance personalised learning and educational access, they may contribute significantly to academic misconduct, including plagiarism and the misuse of AI-generated content. Ray (2023) also postulates that large language models like ChatGPT may generate texts that are not always accurate or reliable and may also be used to create false information or impersonate individuals (Jones & Bergen, 2024). As a result, Cotton et al. (2024) recommend that universities carefully examine the risks and opportunities afforded by AI technologies and tools and ensure that they are used ethically for learning. To this end, the authors recommend that policies and procedures for the use of these tools be developed, alongside staff training not only on detecting and preventing academic dishonesty, but also on designing and implementing authentic teaching and assessments that foster integrity in learning.

The development or deployment of technologies in higher education institutions can therefore be considered an opportunity risk. Opportunity risks arise when an organisation seeks new opportunities or possibilities for achieving strategic objectives and generating new ways of development and revenue growth (Ivascu & Cioca, 2014). However, Hopkin and Thompson (2022) explain that investing in opportunity risk might inhibit the achievement of set objectives, if the outcomes are negative. In other words, taking an opportunity risk could expose a company to other forms of risks. Given this, poor management of educational technologies and tools may constitute opportunity and compliance risk for higher education institutions, with unintended outcomes. Such outcomes could damage the overall quality of education, compromise merit, and lead to loss of capital (Malik et al., 2021). Failure to address these risks may negatively impact the institution’s reputation, as students and sponsors may become sceptical of associating with an institution that has a questionable reputation (Malik et al., 2021), and it may also impact regulatory approvals.

These challenges underscore the need for the present research. We assess existing assessment-risk management protocols (both policies and day-to-day practices) and evaluate how effectively they protect academic integrity in online distance learning environments. By clarifying what works, the research aims to reduce compliance vulnerabilities while harnessing the benefits of educational technologies. Accordingly, this paper analyses the risk management measures adopted by a South African private higher education institution and explains how they promote academic integrity across its distance learning programmes.

The institution offers programmes in two modes: blended contact learning and distance learning. During the research period, the latter was known as “online distance learning,” but it has since been referred to as “distance learning” to reflect its largely independent format with limited synchronous sessions. The institution comprises eight schools across four South African campuses and support centres in South Africa and Namibia; all schools except one offer distance learning programmes. Of the roughly 45,000 enrolled students, nearly 65% study through the distance learning mode.

The literature review section that follows covers academic integrity in higher education institutions, academic integrity in online distance learning contexts, and assessment risk management protocols.

The literature on academic integrity emphasises its fundamental role in maintaining the quality and reputation of educational institutions worldwide. Defined by values such as responsibility, respect, honesty, fairness, and trust (Macfarlane et al., 2014), academic integrity is not merely about avoiding dishonest behaviours like plagiarism or cheating, but also involves a proactive commitment to ethical learning and behaviour (Gamage et al., 2020). Upholding academic integrity enhances institutional reputation, influences student choices, and contributes to the quality of graduates in society (Nabaho & Turyasingura, 2019; Guerrero-Dib et al., 2020).

Conversely, a lack of academic integrity undermines trust in higher education systems, leading to perceptions of producing underqualified professionals and instilling distorted values (Rumyantseva, 2005 cited in Nabaho & Turyasingura, 2019). This issue is particularly evident in some developing countries, including South Africa, where unethical practices like corruption have been reported (Ngcamu & Mantzaris, 2023). The challenges in promoting academic integrity in these contexts highlight broader problems with governance and enforcement of policies within universities. Addressing these issues requires comprehensive approaches such as training, collaboration with stakeholders, and holding accountable those involved in corrupt practices (Ngcamu & Mantzaris, 2023).

In conclusion, while academic integrity is universally acknowledged as crucial for the success and credibility of higher education institutions, its effective implementation requires addressing systemic challenges and fostering a culture where ethical behaviour is prioritised and enforced (Garcia-Villegas et al., 2016 cited in Guerrero-Dib et al., 2020).

The rise of online distance learning has revolutionised access to education, making it more flexible, affordable, and accessible, especially in the wake of technological advances and the COVID-19 pandemic (Christensen et al., 2013; Sadeghi, 2019; Zarzycka et al., 2021; Spiers et al., 2018). This mode allows students to learn remotely, often reducing the financial and logistical burdens of traditional education (Abu Ali, 2024; Najjar et al., 2025). However, the shift to online learning has simultaneously raised significant concerns about academic integrity, with increased opportunities for dishonest behaviours like cheating, plagiarism, and unauthorised collaboration (Verhoef & Coetser, 2021).

Academic integrity, defined by Gamage et al. (2020), encompasses six core values: honesty, trust, fairness, respect, responsibility, and courage. These core values guide ethical academic conduct. However, the abrupt transition to online learning during the pandemic compromised these principles, particularly with assessments (Amzalag et al., 2021; Holden et al., 2021). As Holden et al. (2021) highlight, the ease of Internet access during exams posed a significant threat, with students often searching for answers online. Similarly, Amzalag et al. (2021) caution that online assessments may inadvertently enable unethical behaviours, exacerbating the integrity challenge.

The emergence of AI tools has further intensified concerns about academic integrity, with some students using these tools to bypass assessments (Sullivan et al., 2023). Such developments challenge the integrity of online assessments, requiring concerted efforts from educational institutions and stakeholders to preserve the principles of academic integrity, even in a digital landscape (Verhoef & Coetser, 2021).

The literature on academic integrity emphasises the ongoing challenge of increasing academic dishonesty despite existing protocols and efforts to promote integrity in assessment settings (Holden et al., 2021; Nabaho & Turyasingura, 2019). Nabaho and Turyasingura (2019) emphasise the role of internal and external quality assurance in mitigating corruption and unethical practices in higher education institutions, suggesting that the effectiveness of external quality assurance depends on its alignment with institutional objectives and methodologies.

Similarly, Guerrero-Dib et al. (2020) and Holden et al. (2021) advocate for proactive measures within higher education institutions to cultivate a culture of integrity. Strategies include discussing the importance of academic honesty with students, implementing ethical codes and compliance programmes, and, where needed, employing technologies like online proctoring to discourage cheating in online environments (Guerrero-Dib et al., 2020; Holden et al., 2021).

Furthermore, Mutongoza and Olawale (2022) highlight specific strategies adopted by universities in Botswana, Zimbabwe, and South Africa to combat academic dishonesty, such as using varied versions of exam scripts and plagiarism detection software. They stress the importance of assessment practices that encourage the application of knowledge rather than mere recall of facts (Holden et al., 2021).

Ghias et al. (2014) suggest that despite these efforts, there remains a gap in research on the efficacy of these interventions and the motivations behind their adoption. They further explain that the severity of penalties for academic dishonesty, the clarity of institutional policies, and the consistency in enacting such policies are crucial in shaping students' compliance with ethical standards.

Thus, while various strategies exist to address academic dishonesty, further research is needed to assess their impact and refine approaches to ensure their effectiveness in diverse and rapidly evolving educational landscapes. Creating an environment that discourages cheating and promotes integrity requires a multi-faceted approach involving institutional policies, technological tools, and educational practices that promote ethical behaviour among students and staff.

Building on the gaps and challenges identified in the literature, the paper focuses on the risks associated with assessment integrity in online distance learning and the institutional measures employed to address them. It therefore aims to analyse and interpret risk management protocols in assessment within a private higher education institution offering online distance learning, with the goal of informing policies that uphold academic integrity in these programmes.

The paper is guided by two main research questions:

This section sets out the research paradigm and research design, data collection methods, and data sampling and analysis. It concludes with data verification and research ethics.

Guided by an interpretivist research paradigm, the paper is underpinned by the Committee of Sponsoring Organisations of the Treadway Commission (COSO) Enterprise Risk Management (ERM) framework and sociotechnical systems theory. According to Cohen et al. (2018), an interpretivist paradigm focuses on understanding the subjective experiences and perspectives of individuals. In interpretivist research, reality is seen as socially constructed, emphasising the importance of exploring participants' interpretations and contexts (Kivunja & Kuyini, 2017). The COSO ERM framework provides a structured approach for identifying, assessing, managing, and monitoring risks to create, preserve, and realise organisational value (Mthiyane et al., 2022). It views ERM as a multidirectional and iterative process, wherein each element influences and interacts with the others (Hopkin & Thompson, 2022). The framework comprises eight components: internal environment, objective setting, event identification, risk assessment, risk response, control activities, information and communication, and monitoring.

For the purpose of this paper, four of these components—namely, event identification, risk assessment, risk response, and control activities—were applied to examine the main risks associated with assessment and academic integrity in online distance learning programmes within the private higher education institution. These components also guided the analysis of the risk management protocols used to mitigate and manage such risks. In this paper, risk mediation corresponds to the risk response element of the COSO ERM framework, which encompasses strategies such as avoiding, accepting, transferring, or managing the risk (Hopkin & Thompson, 2022).

To further strengthen the analysis, the paper also draws on sociotechnical systems theory as a theoretical lens. Sociotechnical systems theory posits that organisational outcomes are shaped by the interdependence of social systems (people, policies, cultures) and technical systems (digital tools, infrastructures, processes). Rather than treating technology as a neutral solution, this perspective highlights how risks emerge when misalignments occur between human actors and technological structures (Kudina & Van de Poel, 2024; Trist & Bamforth, 1951). In the context of online distance learning, sociotechnical systems theory highlights how infrastructural limitations, epistemic access, and the adoption of AI tools can interact with institutional cultures and governance mechanisms to produce new vulnerabilities or opportunities for academic integrity.

By combining the interpretivist paradigm, sociotechnical systems theory, and the COSO ERM framework, the paper is positioned to capture both the lived experiences of stakeholders and the systemic interplay of social and technical dimensions of assessment integrity.

An exploratory qualitative research approach was employed in collecting data from 14 participants who were purposively selected. The participants are staff and key stakeholders in online distance learning with considerable lecturing and managerial experience in different formats at the institution.

Two methods of data collection were employed in this research, namely document analysis and open-ended questionnaires. Document analysis involves a systematic examination of written materials to extract meaningful insights about the research topic (Wach & Ward, 2013). Maree (2016) emphasises that document analysis focuses on various forms of written communication that reveal the phenomenon under investigation. On the other hand, Züll (2016) explains that open-ended questionnaires are a data collection method where respondents are required to formulate their responses in their own words, either verbally or in writing. Aligned with Züll (2016), respondents were not guided by predefined response categories, which allowed for more freedom and depth in their answers.

In this research, document analysis was initially employed to extract data from institutional policy documents related to assessment and academic integrity. The insights gained from this analysis informed the development of open-ended qualitative questionnaires and guided subsequent data collection efforts. By employing both methods, the paper aimed to gather comprehensive data that could be cross-validated and to deepen understanding of the research topic (Maree, 2016). Thus, the combination of these methods allowed for a deeper understanding of the risks prevalent in online distance learning, as well as the risk management protocols employed by the institution to address them.

The following institutional policy documents on academic integrity were selected and analysed:

As set out in Table 1, the research targeted key academic stakeholders, including the Dean of Teaching and Learning and three Heads of School from the schools that offer distance learning programmes (the School of Education, School of Commerce, and School of Administration and Management). Additionally, two Academic Managers of the School of Law and eight lecturers from the School of Education participated.

| Distance Learning Stakeholder Groups | No. of Stakeholders | Schools & Programmes | No. of Stakeholders |
|---|---|---|---|
| Lecturers | 8 | Education (HC, Diploma, Degree & Postgrad) | 7 |
| Academic Managers | 2 | Law (HC, Diploma, Degree & Postgrad) | 2 |
| Head of School | 1 | Management and Administration (HC, Advanced Cert, Degree) | 2 |
| Head of School | 1 | Commerce (Degree & Postgrad) | 1 |
| Head of School | 1 | Education (HC, Dip, Degree & Postgrad) | 1 |
| Dean of Teaching, Learning and Student Success | 1 | Head Office (Policy Formation & Regulation) | 1 |
| Grand Total | 14 | | 14 |

The paper adopted a purposive sampling approach, aligned with qualitative research traditions, to select participants and documents relevant to the research focus on academic integrity in online distance learning. Purposive sampling involves selecting participants based on specific characteristics that are deemed crucial to the research objectives (Du Plooy-Cilliers et al., 2014). In this case, participants were chosen for their expertise and involvement in issues related to plagiarism, cheating, and academic integrity in online education. The sampling included two main units of analysis: policy documents and human participants.

The paper employs qualitative methodologies to analyse participants' views on the phenomenon. The data was transcribed, sorted, and broken down into smaller components for detailed examination and understanding. Patterns were identified, coded, and categorised to generate themes aligned with the research questions. Both thematic and content analysis were applied: thematic analysis for qualitative open-ended questions and content analysis for institutional policy documents.

The data analysis process followed the Creswell and Creswell (2018) guidelines of systematically organising and interpreting qualitative data in line with participants’ perspectives. The first author began by transcribing and carefully reading through the collected data, breaking it down into smaller, manageable units for deeper understanding (Maree, 2016). Patterns were identified, coded, and grouped into categories, which were then developed into broader themes aligned with the research questions. This process was guided by the thematic analysis framework of Braun and Clarke (2012), which provides a systematic, six-phase approach to identifying, analysing, and reporting patterns within qualitative data.

Given the research design and the use of two different data sources, both thematic and content analysis were employed. Thematic analysis was applied to the qualitative open-ended questionnaire responses. This involved repeated reading of the data, exporting responses into Microsoft Excel and Word, and using tables for data display and reduction. The first author conducted inductive coding manually, highlighting recurring phrases and assigning colour codes to similar meanings (Cohen et al., 2018). These codes were aggregated into categories, which were further developed into themes addressing assessment risk management protocols in online distance learning.

Content analysis, on the other hand, was applied to institutional policy documents. A latent content analysis approach was chosen, as it enabled the first author to go beyond literal meanings and interpret the underlying intentions and implications of the texts (Kleinheksel et al., 2020). This method supported the interpretive orientation of the research by uncovering implicit meanings related to plagiarism and academic integrity.

By combining thematic and content analysis, the research was able to generate themes from participants’ lived experiences, while also interpreting institutional texts. This dual approach ensured a comprehensive analysis, integrating both subjective experiences and documentary evidence to answer the research questions.

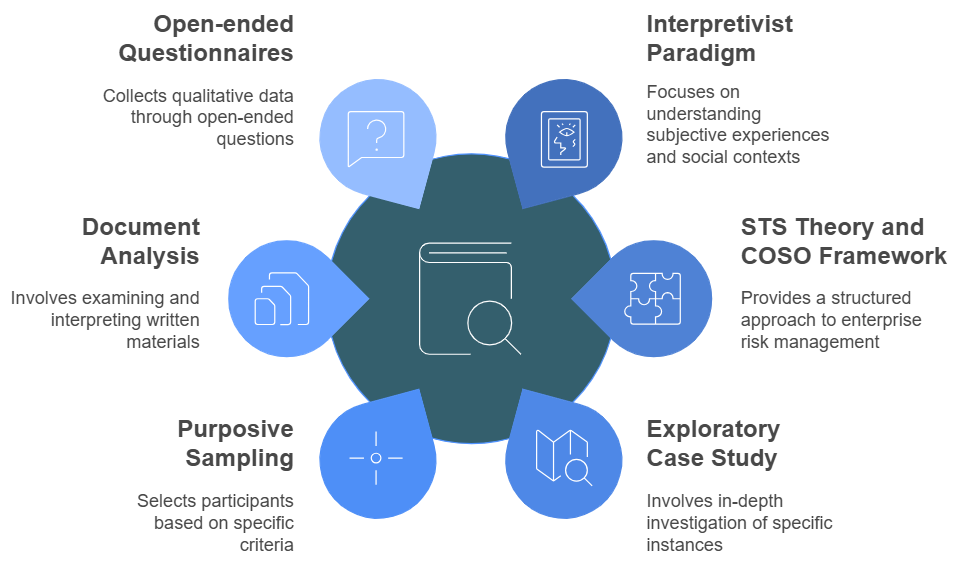

Data verification enhances the accuracy and reliability of the findings, strengthening the paper’s rigour. According to Spiers et al. (2018), this process involves systematic checks to maintain alignment between data, analysis, and interpretation. To ensure data verification, a sample that best represents the research phenomena was selected, achieving effective saturation of categories. The collected data and findings were critically reviewed by both authors to enhance validity and accuracy. A summary of the research design and methods is captured in Figure 1 below.

Figure 1. Summary of research design and methods (Created using Napkin.ai [2025]).

Ethical clearance and formal permission were obtained from the institution’s research office. Informed consent was sought from participants, ensuring they understood the requirements, identity protection, and usage of research findings. Ethical guidelines, as stated by Du Plooy-Cilliers et al. (2014), were adhered to, ensuring clarity, openness, and transparency. Participant anonymity was maintained throughout the research process, allowing them to participate willingly and freely. The authors employed OpenAI’s ChatGPT o3 (OpenAI, 2025) solely for linguistic refinement—specifically, shortening sentences and enhancing coherence. No AI-generated text was accepted without author verification.

The findings and discussion section that follows is structured around the main risks associated with assessment and academic integrity, and the risk management protocols for assessment. The next section presents the findings related to the first research question:

The rapid cultural shifts in the educational landscape, particularly technological advancement and integration, identified as challenges in the literature (Ali et al., 2024), were also found to be relevant for the higher education institution. Thus, the risk management protocols adopted by the institution were meant to address the risks related to technological advancement, as well as dishonest academic practices such as plagiarism and cheating. In addition, the data generated from the open-ended questionnaire showed that some students at the institution battled with access. In this context, access relates to the complex interplay of epistemic and infrastructural access. Thus, not having access to suitable technology for learning and not knowing how to use the available technology to learn emerged strongly as risks.

AI-enabled academic misconduct is the first risk identified by the participants as being present in online distance learning programmes at the institution. The participants indicated that the rapid change in the education landscape due to AI technology is a risk to assessment in online learning.

In the words of P1, AI is a risk because it compromises the academic integrity of assessments:

“I think many people believe that with the changing landscapes of education, artificial intelligence is something that can be useful in education; however, for me I find this to be a risk and challenge associated with assessment in online learning. I find this to be a risk as it compromises the academic integrity of assessments. For example, in an academic essay, students produce AI-generated work that is not authentic and original. Whilst Turnitin may flag this, it is very hard to determine and therefore assessments become very difficult to authenticate.” (P1)

This finding corresponds with Alsharefeen and Al Sayari (2025), who highlight that the rise of AI tools complicates academic integrity in online education. Their study emphasises the necessity of clear ethical guidelines and policies to mitigate AI-enabled misconduct, ensuring preservation of genuine learning and academic standards.

This perspective was confirmed by P8, who stated that the use of novel AI technology like ChatGPT enables cheating and by implication constitutes an assessment risk:

“The recent advent of AI sources such as ChatGTP, Bard, and similar sources has opened a significant new category of cheating because students type the questions into ChatGPT, which then can provide a variety of versions of an answer. Students select the relevant answers, make minor cosmetic adjustments, and present them as their efforts. Being aware that providing sources of reference at face value confirms no plagiarism, students commit fraud by providing references that do not exist, or do not relate to or contain, the information referenced.” (P8)

Based on the above findings, it can be concluded that AI-enabled academic misconduct represents a significant challenge in the context of online distance learning. While AI tools may have afforded people seamless access to information (Chen et al., 2024; Ali et al., 2024), using such tools in an unethical manner compromises the integrity of assessment, thereby causing reputational damage to institutions of learning (Rodrigues et al., 2024). This finding affirms earlier research by McHaney et al. (2016), which found that modern technology has made it easier for students to engage in assessment malpractices. Some of the new technologies like software, sharing, and mobile tools facilitate cheating in online learning environments (Rodrigues et al., 2024).

While AI-enabled misconduct represents a new and rapidly evolving threat to academic integrity, it is important to recognise that these practices often intersect with more traditional forms of dishonesty already present in online learning environments. The emergence of AI tools has not replaced plagiarism, collusion, or contract cheating; rather, it has amplified and diversified them by providing new mechanisms for unethical behaviour. Therefore, the next theme examines broader patterns of unethical and dishonest behaviour that persist within online distance learning, regardless of technological innovation.

The data shows that dishonest practices in the form of plagiarism, collusion, and contract cheating are rife in the online learning space, as suggested by P2:

“Plagiarism & cheating. Students generally have a high plagiarism percentage or it is clear that they copied from one another. A recent problem has been that students buy their assignments from external parties & submit it as their own.” (P2)

Similarly, P8 stated that assessment risks and challenges associated with online learning programmes frequently occur due to the nature of the online distance learning mode, which leaves students unsupervised when preparing formative assessments. The participant explained that students share solutions to questions and copy from one another. Furthermore, as indicated by P8, some students engage third parties to complete tasks for them:

“The Internet, emails, and other technology-based communication platforms such as WhatsApp and Instagram are available. Telegram and many others permit students to easily extract, copy, and paste information they deem relevant and appropriate from Internet sources or by forming and/or joining student groups. Cheating is, therefore, rife and represents a significant risk assessment category when students prepare formative assessments. Students collude by preparing, sharing, or copying answers developed jointly or severally in these groups. Unscrupulous third parties form business entities that purport to provide student study assistance to students. These services include contract cheating, whereby these entities or individuals provide pre-prepared answers to assessments to students at a set fee. Many times, unbeknownst to each other, students purchase the same answers and then present these identical answers as their efforts.” (P8)

Furthermore, P8 suggested that students engage in dishonest practices such as sharing answers, and using large language models and paraphrasing tools:

“Collaboration on individual online tests, usually through sharing of answers/discussion on student WhatsApp groups. Plagiarism of written assignments, and the use of large language models and paraphrasing tools.” (P8)

This shows that plagiarism, collusion, and contract cheating continue to thrive within online learning spaces, a reality echoed by Pike et al. (2025) who describe how students exploit the unsupervised nature of online assessments to share answers or commission third parties to complete their work. This observation corresponds closely with participants’ views of answer-sharing on WhatsApp groups and use of outsourcing services. Furthermore, research by Tang et al. (2025) highlights how the rise of AI tools, including large language models and paraphrasing software, has amplified the challenge of maintaining academic integrity. These technologies enable students to produce plagiarised or misrepresented content with greater sophistication (Sun et al., 2025). Thus, the intersection of technological advancements and traditional misconduct practices presents a significant risk to academic integrity in online education, demanding multi-faceted and proactive institutional responses. Collectively, these findings underscore the urgency for institutions to implement clear integrity policies, robust detection mechanisms, and teaching and assessment designs that reduce opportunities for cheating. Additionally, addressing the emotional and motivational factors driving such behaviours among students is essential, reaffirming the need for a comprehensive approach combining technological, pedagogical, and ethical strategies.

While unethical behaviour and academic dishonesty reflect individual or collective choices, they are often intertwined with broader systemic constraints. The following section therefore explores technological barriers and infrastructure limitations as underlying risks that shape both learning and assessment integrity in online distance education.

The data reveals that some students in online distance learning programmes have limited access to technology suitable for online learning, while others may lack the knowledge to use technology for learning. This supports the view that, while technological advancements broaden access to information and educational materials in higher education (Ali et al., 2024), they also have the potential to exacerbate existing inequalities. As indicated by P9, this undermines one of the core purposes of assessment, namely, to support learning through effective feedback systems (Mutongoza & Olawale, 2022). It is also consistent with broader findings on the digital divide in African higher education, where unequal access to devices and connectivity exacerbates exclusion and frustration among students (Azionya & Nhedzi, 2021).

“There are many risks and challenges that can negatively impact the integrity of the formal assessments. The first biggest challenge is technical problems or connection issues that may occur during the exam. Another student also struggled to open the test because he was in the Eastern Cape and it was heavily raining with thunderstorms. The signal was insufficient and had to drive to Queenstown to be able to attempt the online test. Again, this links to technology as students are using their cell phones and do not always see the methods of feedback on their cell phones.” (P9)

A similar view was expressed by P10:

“Many students are using their cell phones to submit assignments. Many students are not computer literate and struggle with LMS systems and manoeuvring around them. Wi-Fi: This is not easily accessible to students.” (P10)

Evidently, the lack of access to learning technologies and limited knowledge of how to use technology for learning present a risk to assessment, given that students are not able to complete assessments as and when they are due. Similar challenges have been documented in South Africa, where rural and urban distance learners experience differing levels of digital access, shaping their learning opportunities and assessment participation (Lembani et al., 2020). Consequently, some may resort to unauthorised means, including outsourced services and the use of large language models or other online tools, to compensate for their lack of digital competence. Technological and infrastructural limitations can thus indirectly contribute to integrity risks by creating conditions of frustration, exclusion, or inequitable access. When students struggle with connectivity, inadequate devices, or limited digital literacy, their capacity to participate ethically and confidently in assessments may be compromised. This corresponds with research conducted in higher education institutions elsewhere, which identified lack of knowledge and access to suitable technology as a risk to academic integrity in online learning environments (Muhammad et al., 2020; Ray, 2023; Perkins, 2023; Miles et al., 2022; Sevnarayan & Maphoto, 2024). The challenge is therefore not unique to South African higher education institutions.

The following section presents the findings related to the second research question:

The data revealed that, to improve the risk management protocols and strategies identified in this research and so strengthen the academic integrity of assessment in the institution's online distance learning programmes, policy makers must consult broadly and offer support to policy implementers and students. This could enhance their understanding of the content of each policy and the rationale for its development. As conceived in the COSO ERM framework, the internal environment sets the foundation for how risk is viewed, and that foundation influences the risk appetite and ethical values within the organisation (Hopkin & Thompson, 2022). Similar calls have been put forward by scholars such as Chen et al. (2024), who advocated for the establishment of international collaboration frameworks to enable the exchange of best practices and the development of unified ethical standards for AI in educational settings.

Additionally, the data revealed that both those who enforce the policies and those who are governed by them must be offered support to enable them to achieve their potential. The support could take the form of technological deployment and continuous training.

The data indicates that students and staff are required to complete a compulsory academic integrity course to uphold the integrity of assessments within the institution. Implementing such mandatory AI ethics and integrity training for researchers, academic staff, and students fosters an in-depth understanding of potential AI misuse and promotes ethical practices among researchers (Bin-Nashwan et al., 2023; Cotton et al., 2024), as echoed by P10:

“We make sure that all students complete the academic integrity policy short course, so that they may know what to do and what not to do. Also raise awareness of the consequences of plagiarism.” (P10)

The view that students and staff are trained to maintain academic integrity was confirmed by P11, P13, and P14:

“Guiding students on academic integrity—the Academic Integrity Course on Canvas is compulsory for students and staff to complete and they sign a plagiarism pledge. Promoting a culture of integrity in all aspects of student life and encouraging lifelong learning and individual responsibility for own learning.” (P 11)

“Ensuring academic integrity in online distance learning programs involves the implementation of various measures. Here are some common strategies for Identity Verification (authenticity through means of photos added in assessments or videos), Clear Academic Integrity Policies.” (P13)

“[The Institution] also have very clear steps to prevent plagiarism and cheating. Students are required to complete an academic integrity course.” (P14)

All four participants stressed the need for compulsory short courses on academic integrity for students and staff. This aligns with institutional policy, which requires repeat offenders to retake the course, and shows the participants’ strong grasp of assessment regulations. In the AI era, such training is essential to sustain a culture of integrity (Nnorom, 2025; Moya et al., 2023). Pratschke (2024) argues that AI ethics and integrity training should move beyond compliance to cultivate proactive digital and AI literacy among educators and students. She emphasises that fostering a culture of human–AI co-design and critical awareness better prepares learners to engage ethically with intelligent technologies, rather than relying solely on detection and penalty mechanisms. Furthermore, this finding highlights a strong alignment between institutional policies and their practical implementation related to academic integrity.

The data shows that the institution deploys and uses institutional assessment and academic governance policies as a risk management protocol to maintain academic integrity. For instance, P11 indicated that there are policies and procedures that govern the development of assessment tasks and ensure that students are aware of academic integrity and the consequences of cheating and similar misbehaviour. This perspective was echoed by other participants, for example:

“The institution has an official Assessment Policy, Plagiarism Policy and a student Conduct Policy that intend to address, among other things, assessment risks. Students are informed of these policies and have access to them, and Stadio also provides training to students concerning the contents of these and other policies and regulations.” (P9)

Additionally, the data reveals that students are required to sign a declaration as a way of authenticating their assignment submission and understanding that cheating is a punishable offense, as explained by P10:

“In addition, I have other strategies to ensure that I minimize cheating on assessments. In all my assessments I add a statement that says, ‘By submitting this assignment, I unequivocally state that all work is entirely my own and does not violate Institutional Academic Integrity policy’ and I ask students to sign this prior to submitting each assignment. This helps in making sure that students are aware that cheating is a punishable offense.” (P10)

Taking this further, P14 suggests that the institution maintains the academic integrity of assessment through an assessment authentication process, which includes requiring students to have their submissions stamped and signed at a magistrate’s court:

“The institution has a policy on authenticity. Assessments are authenticated. E.g., in the Legal Skills modules a student must get a stamp and signature of the closest magistrate’s court (with the detail of the specific court). The court official must confirm the attendance of the student.” (P14)

Standard operating procedures, submission-authentication measures, and related governance policies form core safeguards for assessment integrity. Students are not only informed of these rules but receive training on them. Within the COSO ERM framework, this well-structured internal environment aligns with the institution’s vision to effectively manage assessment risks. Nonetheless, the rapid adoption of AI by education stakeholders, particularly students, outpaces the development of related courses and policies at the institutional level (Artyukhov et al., 2024). This disparity creates a three-way conflict among institutional management, academics, and students, driven by the lack of clear rules governing AI use in education. As a result, academic integrity may be compromised in the implementation of AI (Rodrigues et al., 2024). Thus, there is a pressing need to establish ethical guidelines, promote awareness, uphold transparency and accountability, ensure human oversight, and engage in global collaboration with experts (Nnorom, 2025).

Furthermore, the findings revealed that assessment authentication tools and diversified assessment practices are used as risk management protocols to maintain academic integrity in online distance learning programmes, as stated by P1:

“I generally use Turnitin as a measure which flags plagiarism and AI. It may not always be accurate with AI, but as much as possible, I do implement policy by flagging these issues with students.” (P1)

Similarly, P9 and P11 indicated that Turnitin is used by the institution to detect academic dishonesty, such as plagiarism, through flagging similarity:

“Furthermore, the institution uses Turnitin to submit formative assessments. This system includes the facility to detect and report possible plagiarism and cheating. A recent addition to the system is detecting the possible use of AI.” (P9)

“Use of plagiarism detection software—Turnitin—to identify similarity % and possible use of AI, which is then investigated further by markers.” (P11)

The above findings align with research by Susnjak and McIntosh (2024), who suggest that the proliferation of AI tools in the education sector necessitates adopting measures, such as advanced proctoring systems and more sophisticated multi-modal exam questions, to mitigate potential academic misconduct enabled by AI technologies. In addition, the findings reveal that academics depend on Turnitin as a primary tool for detecting instances of academic dishonesty, as suggested by P8:

“The institution uses Turnitin that indicates the similarity of students’ work against that of other academic works and student submissions. Turnitin also indicates the possibility of students using AI to compile their submissions.” (P8)

This confirms that AI detection tools can help educators discern subtle patterns that differentiate human from AI-generated work (Rane et al., 2024). However, as Pratschke (2024) cautions, an overreliance on detection technologies risks framing AI purely as a threat to integrity, rather than as a catalyst for pedagogical redesign. She advocates that institutions prioritise proactive, design-oriented assessment strategies that integrate AI literacy and authentic, process-based evaluation, rather than focusing solely on detection and punishment. Furthermore, the results show that diversified assessment practices are employed to maintain the academic integrity of assessment in the institution’s online distance learning programmes:

“For me personally, I try to make assignments quite reflective and personal, which makes the use of AI difficult. I also explore a variety of innovative ways to assess and authenticate assessments. For example, I try to include video presentation components in assignments and in-venue tests as well.” (P1)

Echoing this, P3 explained that assessments are set up in such a way that questions differ from one student to the other and the tasks are timed:

“I use a large question bank (hundreds of questions) for each study unit to make online tests more authentic, with random questions selected, randomised MCQ answer positions, and a strict time limit.” (P3)

This finding supports the argument that the use of digital assessment and diversification of assessment will emphasise the process of learning over the product and help to curb the misuse of AI in educational settings (Rane et al., 2024). Agreeing, P11 stated that diversified assessment by way of randomisation of questions is used as an assessment risk management protocol to ensure that the integrity of assessment is maintained:

“Design of assessments—randomisation of questions—to ensure students get different questions so limiting cheating opportunities, timed assessments so students find it difficult to seek help from each other or search for answers online, open-book type/application assessments so students apply their knowledge and use higher order skills such as evaluation and critical-thinking.” (P11)
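The randomisation that P3 and P11 describe can be illustrated with a minimal sketch. It is not the institution's or its learning management system's actual implementation; the names `Question`, `build_test`, and the `sitting` label are hypothetical and introduced only for illustration. The sketch assumes that each student's paper is drawn from a shared question bank, with the answer positions shuffled, and that the random draw is seeded by the student identifier so that a marker can regenerate the paper a student saw.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class Question:
    """One multiple-choice item; `answer` holds the text of the correct option."""
    prompt: str
    options: tuple
    answer: str


def build_test(bank, student_id, n_questions, sitting="2025-S1"):
    """Draw a per-student test: a random subset of questions, shuffled options.

    The generator is seeded with a hypothetical sitting label plus the
    student ID, so the same student always receives the same paper.
    """
    if n_questions > len(bank):
        raise ValueError("question bank is smaller than the requested test")
    rng = random.Random(f"{sitting}:{student_id}")
    chosen = rng.sample(bank, n_questions)  # random subset without repeats
    paper = []
    for q in chosen:
        opts = list(q.options)
        rng.shuffle(opts)  # randomise the MCQ answer positions
        paper.append(Question(q.prompt, tuple(opts), q.answer))
    return paper


# Illustrative bank of 200 placeholder items (invented, not study data).
bank = [Question(f"Question {i}", ("A", "B", "C", "D"), "A") for i in range(200)]
paper_a = build_test(bank, "student-001", 10)
paper_b = build_test(bank, "student-002", 10)
```

Seeding by student identifier is one possible design choice: it keeps each paper reproducible for moderation or appeals. A time limit, which both participants also mention, would be enforced separately by the assessment platform.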

Randomising questions gives each student a different set, thereby reducing opportunities for cheating and encouraging deeper learning. Together with text-matching software and multiple test versions, now standard across South African universities, this approach safeguards assessment integrity (Mutongoza & Olawale, 2022). This demonstrates that the institution where this study was conducted aligns with practices implemented by other higher education institutions to combat academic dishonesty and uphold academic integrity. The above findings are summarised in Figure 2 below, which represents the Emergent, Behavioural, and Structural risks addressed through Tools, Practices, and Training (EBS-TPT) model.

Figure 2. EBS-TPT model for assessment: Risk factors and risk response strategies (Created using Napkin.ai [2025]).

This paper investigates assessment integrity risks in a private higher education institution’s online distance learning programmes and reviews the strategies used to manage them. Rapid technological change and rising academic dishonesty, exacerbated by epistemic gaps and infrastructural shortfalls, emerge as the main threats to assessment integrity. Institutional policies and digital tools constitute the core mitigation measures.

Findings are synthesised in the EBS-TPT model (Figure 2), which underscores the importance of integrating digital tools ethically to safeguard the credibility of academic qualifications in online environments. Drawing on the first author’s master’s dissertation, the paper recommends ongoing training for both students and staff on the responsible use of technology in assessment, thereby strengthening academic integrity across digital learning contexts.

To structure its analysis, the research employs the COSO ERM framework, a systematic lens for identifying, assessing, and managing threats to academic integrity. This approach illustrates how institutions can curb misconduct while optimising the pedagogical benefits of digital technologies. Special attention is paid to the ambivalent role of AI tools such as ChatGPT, which serve simultaneously as powerful learning aids and potential facilitators of dishonesty. By foregrounding this duality, the paper adds nuance to current debates on the ethical integration of AI in higher education.

A key limitation of this research is the absence of student voices, particularly those enrolled in online distance learning programmes. Their perspectives are essential for a more comprehensive understanding of the challenges and effectiveness of such learning environments, and their exclusion limits the depth of the findings. Furthermore, the sample size is small and could have promoted a specific viewpoint. Also, given that this is a qualitative case study, the results are context-specific and cannot be generalised to other higher education institutions in South Africa, or abroad. Nonetheless, the insights offered may be valuable for comparative analysis and inform future research in similar settings.

A central contribution of this research is its appraisal of the institution’s current safeguards for assessment integrity: similarity detection software (to point out possible plagiarism), secure authentication of submissions, varied assessment designs, and mandatory academic integrity training for students and staff. The findings confirm that these measures are critical to cultivating a culture of ethical learning and accountability in online settings.

The paper therefore calls for ongoing institutional adaptation: policies and governance mechanisms must evolve alongside new technologies and ever-shifting forms of misconduct. It also urges international collaboration to craft shared ethical guidelines for AI in higher education, ensuring that technological advances reinforce rather than erode academic integrity. Pratschke (2024) similarly contends that sustaining academic integrity in the age of generative AI demands a shift from reactive control toward proactive co-design. She argues that educators and institutions should embed ethical awareness, digital literacy, and collaborative AI engagement within assessment design—fostering trust, transparency, and human agency rather than relying solely on detection technologies.

By examining the intersection of technological innovation, policy enforcement, and ethics, the research extends the literature on academic integrity in digital learning. The EBS-TPT model presented here offers actionable guidance for institutions striving to balance opportunity with risk in online learning settings. Sustaining integrity in this evolving landscape requires a shift from reactive control measures toward proactive, design-oriented practices that cultivate ethical awareness, digital literacy, and responsible engagement with intelligent technologies. Future research should test the long-term efficacy of these measures, incorporate the student voice, and explore novel assessment models that align with the realities of digital learning environments.

Abu-Ali, A. (2024). The effect of e-learning in the digital age. Creative Education, 15(12), 2486–2498. https://doi.org/10.4236/ce.2024.1512151

Ali, O., Murray, P. A., Momin, M., Dwivedi, Y. K., & Malik, T. (2024). The effects of artificial intelligence applications in educational settings: Challenges and strategies. Technological Forecasting and Social Change, 199, 123076. https://doi.org/10.1016/j.techfore.2023.123076

Alsharefeen, R., & Al Sayari, N. (2025). Examining academic integrity policy and practice in the era of AI: A case study of faculty perspectives. Frontiers in Education, 10, 1621743. https://doi.org/10.3389/feduc.2025.1621743

Amzalag, M., Shapira, N., & Dolev, N. (2021). Two sides of the coin: Lack of academic integrity in exams during the Corona pandemic, students' and lecturers' perceptions. Journal of Academic Ethics, 20(2), 243–263. https://doi.org/10.1007/s10805-021-09413-5

Artyukhov, A., Wołowiec, T., Artyukhova, N., Bogacki, S., & Vasylieva, T. (2024). SDG 4, Academic integrity and artificial intelligence: Clash or win-win cooperation? Sustainability, 16(19), 8483. https://doi.org/10.3390/su16198483

Azionya, C. M., & Nhedzi, A. (2021). The digital divide and higher education challenge with emergency online learning: Analysis of tweets in the wake of the COVID-19 lockdown. Turkish Online Journal of Distance Education, 22(4), 164–182. https://doi.org/10.17718/tojde.1002822

Bali, A. O., & Rached, K. (2023). Online education via media platforms and applications as an innovative teaching method. Education and Information Technologies, 28(1), 507–523. https://doi.org/10.1007/s10639-022-11188-0

Bayram, H., & Tikman, F. (2022). Determining student teachers’ rates of plagiarism during the distance education and investigating possible reasons for plagiarism. Turkish Online Journal of Distance Education, 23(1), 210–236. https://doi.org/10.17718/tojde.1050398

Bin-Nashwan, S. A., Sadallah, M., & Bouteraa, M. (2023). Use of ChatGPT in academia: Academic integrity hangs in the balance. Technology in Society, 75, 102370. https://doi.org/10.1016/j.techsoc.2023.102370

Braun, V., & Clarke, V. (2012). Thematic analysis. In H. Cooper, P. M. Camic, D. L. Long, A. T. Panter, D. Rindskopf, & K. J. Sher (Eds.), APA handbook of research methods in psychology (Vol. 2, pp. 57–71). American Psychological Association. https://psycnet.apa.org/doi/10.1037/13620-004

Chen, Z., Chen, C., Yang, G., He, X., Chi, X., Zeng, Z., & Chen, X. (2024). Research integrity in the era of artificial intelligence: Challenges and responses. Medicine, 103(27), e38811. http://dx.doi.org/10.1097/MD.0000000000038811

Christensen, C. M., Horn, M. B., & Staker, H. (2013). Is K-12 blended learning disruptive? An introduction to the theory of hybrids. Clayton Christensen Institute for Disruptive Innovation. https://files.eric.ed.gov/fulltext/ED566878.pdf

Clarke, O., Chan, W. Y. D., Bukuru, S., Logan, J., & Wong, R. (2022). Assessing knowledge of and attitudes towards plagiarism and ability to recognize plagiaristic writing among university students in Rwanda. Higher Education, 85(2), 247–263. https://doi.org/10.1007/s10734-022-00830-y

Cohen, L., Manion, L., & Morrison, K. (2018). Research methods in education (8th ed.). Routledge.

Cotton, D. R. E., Cotton, P. A., & Shipway, J. R. (2024). Chatting and cheating: Ensuring academic integrity in the era of ChatGPT. Innovations in Education and Teaching International, 61(2), 228–239. https://doi.org/10.1080/14703297.2023.2190148

Creswell, J. W., & Creswell, J. D. (2018). Research design: Qualitative, quantitative, and mixed methods approaches (5th ed.). Sage Publications.

Dolgui, A., & Ivanov, D. (2020). Exploring supply chain structural dynamics: New disruptive technologies and disruption risks. International Journal of Production Economics, 229, 107886. https://doi.org/10.1016/j.ijpe.2020.107886

Du Plooy-Cilliers, F., Davis, C., & Bezuidenhout, R. (2014). Research matters. Juta.

Gamage, K. A., Silva, E. K. D., & Gunawardhana, N. (2020). Online delivery and assessment during COVID-19: Safeguarding academic integrity. Education Sciences, 10(11), 301. https://doi.org/10.3390/educsci10110301

García-Villegas, M., Franco-Pérez, N., & Cortés-Arbeláez, A. (2016). Perspectives on academic integrity in Colombia and Latin America. In T. Bretag (Ed.), Handbook of academic integrity (1st ed., pp. 161–185). Springer.

Ghias, K., Lakho, G. R., Asim, H., Azam, I. S., & Saeed, S. A. (2014). Self-reported attitudes and behaviours of medical students in Pakistan regarding academic misconduct: A cross-sectional study. BMC Medical Ethics, 15(1), 1–14. https://doi.org/10.1186/1472-6939-15-43

Guerrero-Dib, J. G., Portales, L., & Heredia-Escorza, Y. (2020). Impact of academic integrity on workplace ethical behaviour. International Journal for Educational Integrity, 16(1), 1–18. https://doi.org/10.1007/s40979-020-0051-3

Habib, S., & Hamadneh, N. N. (2021). Impact of perceived risk on consumers technology acceptance in online grocery adoption amid Covid-19 pandemic. Sustainability, 13(18), 10221. https://doi.org/10.3390/su131810221

Holden, O. L., Norris, M. E., & Kuhlmeier, V. A. (2021). Academic integrity in online assessment: A research review. Frontiers in Education, 6, 639814. https://doi.org/10.3389/feduc.2021.639814

Hopkin, P., & Thompson, C. (2022). Fundamentals of risk management (6th ed.). Institute of Risk Management. ISBN 978 1 3986 0286 1.

Ivascu, L., & Cioca, L. I. (2014). Opportunity risk: Integrated approach to risk management for creating enterprise opportunities. Advances in Education Research, 49(1), 77–80.

Jones, C. R., & Bergen, B. K. (2024). Lies, damned lies, and distributional language statistics: Persuasion and deception with large language models. arXiv preprint arXiv:2412.17128. https://doi.org/10.48550/arXiv.2412.17128

Khalil, M., & Er, E. (2023). Will ChatGPT get you caught? Rethinking of plagiarism detection. In International Conference on Human-Computer Interaction (pp. 475–487). Springer Nature Switzerland.

Kivunja, C., & Kuyini, A. B. (2017). Understanding and applying research paradigms in educational contexts. International Journal of Higher Education, 6(5), 26–41. https://doi.org/10.5430/ijhe.v6n5p26

Kleinheksel, A. J., Rockich-Winston, N., Tawfik, H., & Wyatt, T. R. (2020). Demystifying content analysis. American Journal of Pharmaceutical Education, 84(1), 7113. https://doi.org/10.5688/ajpe7113

Kudina, O., & van de Poel, I. (2024). A sociotechnical system perspective on AI. Minds and Machines, 34(3), Article 21. https://doi.org/10.1007/s11023-024-09680-2

Lembani, R., Gunter, A., Breines, M., & Dalu, M. T. B. (2020). The same course, different access: The digital divide between urban and rural distance education students in South Africa. Journal of Geography in Higher Education, 44(1), 70–84.

Macfarlane, B., Zhang, J., & Pun, A. (2014). Academic integrity: A review of the literature. Studies in Higher Education, 39(2), 339–358. https://doi.org/10.1080/03075079.2012.709495

Malik, M. A., Mahroof, A., & Ashraf, M. A. (2021). Online university students’ perceptions on the awareness of, reasons for, and solutions to plagiarism in higher education: The development of the AS&P model to combat plagiarism. Applied Sciences, 11(24), 12055. https://doi.org/10.3390/app112412055

Maree, K. (Ed.). (2016). First steps in research (2nd ed.). Van Schaik Publishers.

McHaney, R., Cronan, T. P., & Douglas, D. E. (2016). Academic integrity: Information systems education perspective. Journal of Information Systems Education, 27(3), 153–158.

Miles, P. J., Campbell, M., & Ruxton, G. D. (2022). Why students cheat and how understanding this can help reduce the frequency of academic misconduct in higher education: A literature review. Journal of Undergraduate Neuroscience Education, 20(2), A150–A160. https://doi.org/10.59390/LXMJ2920

Moya, B., Eaton, S. E., Pethrick, H., Hayden, K. A., Brennan, R., Wiens, J., McDermott, B., & Lesage, J. (2023). Academic integrity and artificial intelligence in higher education contexts: A rapid scoping review protocol. Canadian Perspectives on Academic Integrity, 7(3). http://doi.org/10.55016/ojs/cpai.v7i3/78123

Mthiyane, Z. Z., van der Poll, H. M., & Tshehla, M. F. (2022). A framework for risk management in small medium enterprises in developing countries. Risks, 10(9), 173. https://doi.org/10.3390/risks10090173

Muhammad, A., Shaikh, A., Naveed, Q. N., & Qureshi, M. R. N. (2020). Factors affecting academic integrity in E-learning of Saudi Arabian Universities: An investigation using Delphi and AHP. IEEE Access, 8, 16259–16268. https://doi.org/10.1109/ACCESS.2020.2967499

Mutongoza, B. H., & Olawale, B. E. (2022). Safeguarding academic integrity in the face of emergency remote teaching and learning in developing countries. Perspectives in Education, 40(1), 234–249. http://dx.doi.org/10.18820/2519593X/pie.v40.i1.14

Nabaho, L., & Turyasingura, W. (2019). Battling academic corruption in higher education: Does external quality assurance (EQA) offer a ray of hope? Higher Learning Research Communications, 9(1). https://doi.org/10.18870/hlrc.v9i1.449

Najjar, N., Rouphael, M., Bitar, T., & Hleihel, W. (2025). The rise and drop of online learning: Adaptability and future prospects. Frontiers in Education, 10, 1522905. https://doi.org/10.3389/feduc.2025.1522905

Napkin AI. (2025). Napkin AI [Artificial intelligence system]. https://www.napkin.ai

Ngcamu, B. S., & Mantzaris, E. (2023). Policy enforcement, corruption and stakeholder interference in South African universities. Journal of Transport and Supply Chain Management, 17, 814. https://doi.org/10.4102/jtscm.v17i0.814

Nnorom, I. C. (2025). Ethical considerations in artificial intelligence and academic integrity: Balancing technology and human values. In AI and ethics, academic integrity and the future of quality assurance in higher education (pp. 93–100). Sterling Publishers.

OpenAI. (2025). ChatGPT o3 [Large language model]. https://openai.com/index/introducing-o3-and-o4-mini/

Perkins, M. (2023). Academic integrity considerations of AI large language models in the post-pandemic era: ChatGPT and beyond. Journal of University Teaching & Learning Practice, 20(2), 7. https://doi.org/10.53761/1.20.02.07

Pike, R. K., Buck, L. A., Tsoukkas, E., & Bell, E. (2025). From collaboration to contract cheating: Exploring staff and student perceptions of the grey areas of academic outsourcing. Assessment & Evaluation in Higher Education, 50(8), 1–21. https://doi.org/10.1080/02602938.2025.2539291

Pratschke, B. M. (2024). Generative AI and education: Digital pedagogies, teaching innovation and learning design. Springer Nature Switzerland AG. https://doi.org/10.1007/978-3-031-67991

Rane, N., Shirke, S., Choudhary, S. P., & Rane, J. (2024). Education strategies for promoting academic integrity in the era of artificial intelligence and ChatGPT: Ethical considerations, challenges, policies, and future directions. Journal of ELT Studies, 1(1), 36–59. https://doi.org/10.48185/jes.v1i1.1314

Ray, P. P. (2023). ChatGPT: A comprehensive review on background, applications, key challenges, bias, ethics, limitations and future scope. Internet of Things and Cyber-Physical Systems, 3, 121–154. https://doi.org/10.1016/j.iotcps.2023.04.003

Rodrigues, M., Silva, R., Borges, A. P., Franco, M., & Oliveira, C. (2024). Artificial intelligence: Threat or asset to academic integrity? A bibliometric analysis. Kybernetes, 54(5), 2939–2970. https://doi.org/10.1108/K-09-2023-1666

Rumyantseva, N. L. (2005). Taxonomy of corruption in higher education. Peabody Journal of Education, 80(1), 81–92. https://doi.org/10.1207/S15327930pje8001_5

Sadeghi, M. (2019). A shift from classroom to distance learning: Advantages and limitations. International Journal of Research in English Education, 4(1), 80–88. https://doi.org/10.29252/ijree.4.1.80

Sevnarayan, K., & Maphoto, K. B. (2024). Exploring the dark side of online distance learning: Cheating behaviours, contributing factors, and strategies to enhance the integrity of online assessment. Journal of Academic Ethics, 22, 51–70. https://doi.org/10.1007/s10805-023-09501-8

Spiers, J., Morse, J. M., Olson, K., Mayan, M., & Barrett, M. (2018). Reflection/commentary on a past article: Verification strategies for establishing reliability and validity in qualitative research. International Journal of Qualitative Methods, 17(1), 1609406918788237. https://doi.org/10.1177/1609406918788237

Stander, E., & Herman, C. (2017). Barriers and challenges private higher education institutions face in the management of quality assurance in South Africa. South African Journal of Higher Education, 31(5), 206–224. https://doi.org/10.20853/31-5-1481

Sullivan, M., Kelly, A., & McLaughlan, P. (2023). ChatGPT in higher education: Considerations for academic integrity and student learning. Journal of Applied Learning and Teaching, 6(1), 31–40. https://doi.org/10.37074/jalt.2023.6.1.17

Sun, R., Tang, M., Zhou, J., Loan, N. T. T., & Wang, C.-Y. (2025). The dark tetrad as associated factors in generative AI academic misconduct: Insights beyond personal attribute variables. Frontiers in Education, 10. https://doi.org/10.3389/feduc.2025.1551721

Susnjak, T., & McIntosh, T. R. (2024). ChatGPT: The end of online exam integrity? Education Sciences, 14(6), 656. https://doi.org/10.3390/educsci14060656

Trist, E. L., & Bamforth, K. W. (1951). Some social and psychological consequences of the longwall method of coal-getting. Human Relations, 4(1), 3–38. https://doi.org/10.1177/001872675100400101

Verhoef, A. H., & Coetser, Y. M. (2021). Academic integrity of university students during emergency remote online assessment: An exploration of student voices. Transformation in Higher Education, 6. https://doi.org/10.4102/the.v6i0.132

Wach, E., & Ward, R. (2013). Learning about qualitative document analysis. Institute of Development Studies. https://opendocs.ids.ac.uk/articles/report/Learning_about_Qualitative_Document_Analysis/26442637

Zarzycka, E., Krasodomska, J., Mazurczak-Mąka, A., & Turek-Radwan, M. (2021). Distance learning during the COVID-19 pandemic: Students’ communication and collaboration and the role of social media. Cogent Arts & Humanities, 8(1), 1953228. https://doi.org/10.1080/23311983.2021.1953228

Züll, C. (2016). Open-ended questions (Version 2.0). GESIS Survey Guidelines. GESIS - Leibniz-Institut für Sozialwissenschaften. https://doi.org/10.15465/gesis-sg_en_002

Dr Godson Chinenye Nwokocha is a Senior Lecturer and Academic Manager for Undergraduate Distance Learning at STADIO Higher Education, South Africa, and a postgraduate guest lecturer in Science and Technology Education at the University of KwaZulu-Natal. With more than a decade of experience in higher education, he offers expertise in academic leadership, digital innovation, and the effective delivery of strategic educational projects across diverse and evolving learning environments.

He leads large-scale academic programmes with a strong focus on curriculum design, programme mapping for industrial relevance, academic quality assurance, and the digital transformation of teaching and learning. His research interests span Open Distance Learning, Instructional and Educational Technologies, Inclusive Education, Indigenous Knowledge Systems, Decolonisation in educational contexts, and climate-change technology adaptation in smallholder farming. He has supervised and continues to supervise postgraduate students in these areas.

Dr Nwokocha is a member of several professional bodies, including the South African Council for Educators (SACE), Epsilon Pi Tau (Delta Beta Chapter), and the Golden Key International Honour Society at UKZN.

Dr Jolanda De Villiers Morkel is a senior academic, researcher, and designer whose work sits at the intersection of higher education, technology, and inclusive learning. As Head of Instructional Design at STADIO Higher Education, she guides institution-wide initiatives that strengthen quality teaching, learning design, and AI-enabled learning innovation. Her research spans conversational agents, cognitive apprenticeship, inclusive assessment, intercultural learning, and design-based pedagogies in the Global South. A qualified architect with two decades of lecturing experience at the Cape Peninsula University of Technology, Jolanda brings deep expertise in studio-based learning and creative practices. She actively contributes to national and international scholarship through conference presentations, collaborative research projects, and AI-policy development. Passionate about widening access and student success, she designs learning environments that are human-centred, responsive, and grounded in contextual realities. Jolanda is committed to shaping ethical, future-ready educational ecosystems that empower diverse students to thrive.

Figure 1 image description: Diagram illustrating the research design and methods.

Figure 2 image description: Diagram illustrating that risk factors lead to emergent, behavioural, and structural risks. These, in turn, lead to risk response strategies, including training, policies, and tools and practices.